TL;DR

We embedded a multi-agent AI system directly inside a Ruby backend to translate legal requirements into developer language and assemble defensible audit evidence.

The results:

- Audit time: ~40 hours → 3–4 hours

- Cost per audit: ~$2,000 → $100–$150

- Accuracy: ~90% (spot-checked)

- Annual savings: $500k+ at ~300 audits/year

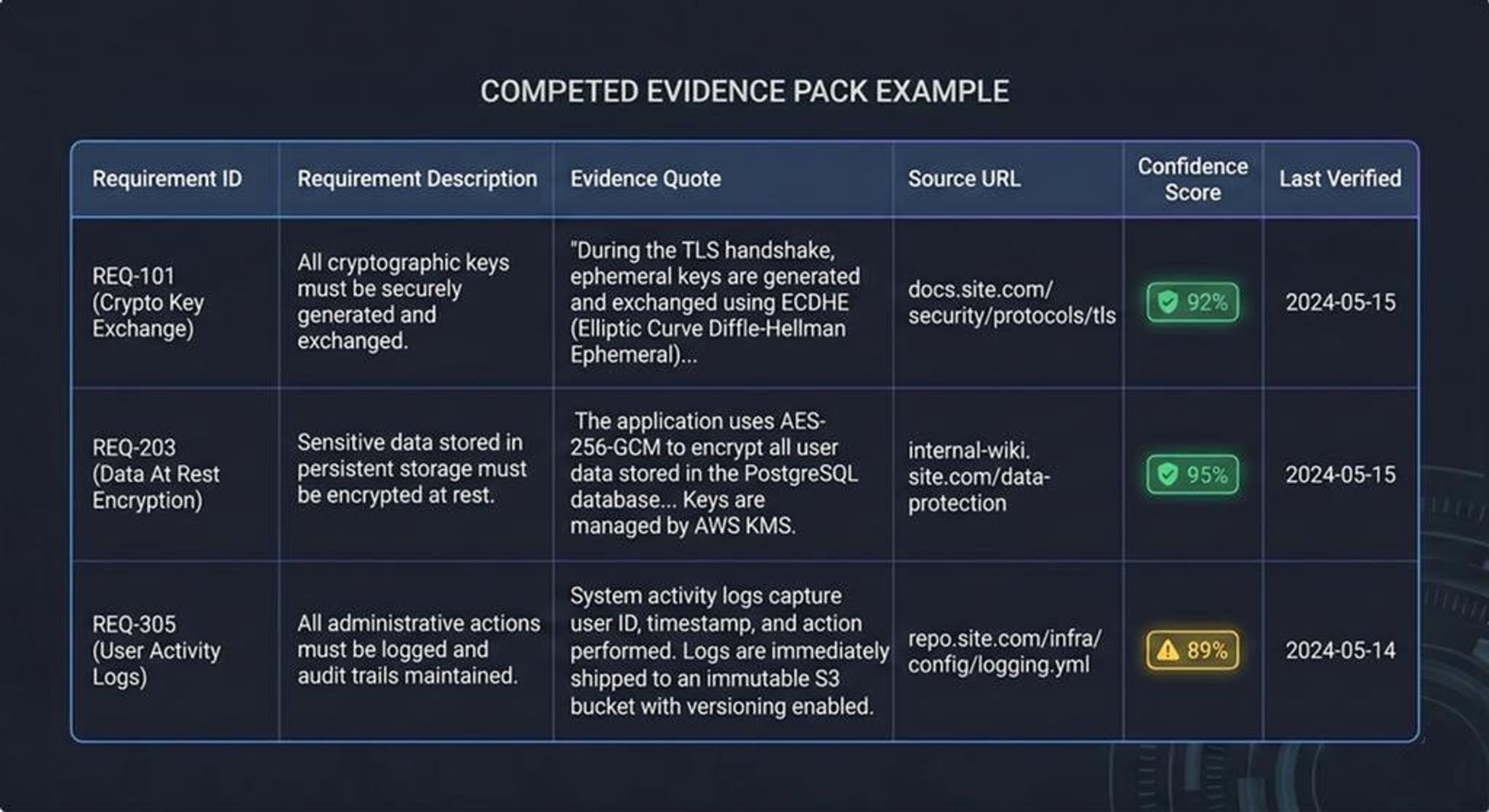

Instead of returning links, the system produces evidence packs with quotes, sources, and confidence scores, ready for legal review.

The Real Problem: Getting Proof, Not Links

Auditors rarely fail because the information doesn’t exist. They fail because finding defensible proof takes forever.

A typical compliance or patent review means digging through documentation, repositories, release notes, and technical guides trying to answer a single question: Is this company actually implementing what its legal documents claim?

And the answer can’t just be a link. Legal reviewers need evidence mapped to specific requirements, with traceable sources and exact passages that support the claim.

But there's a catch.

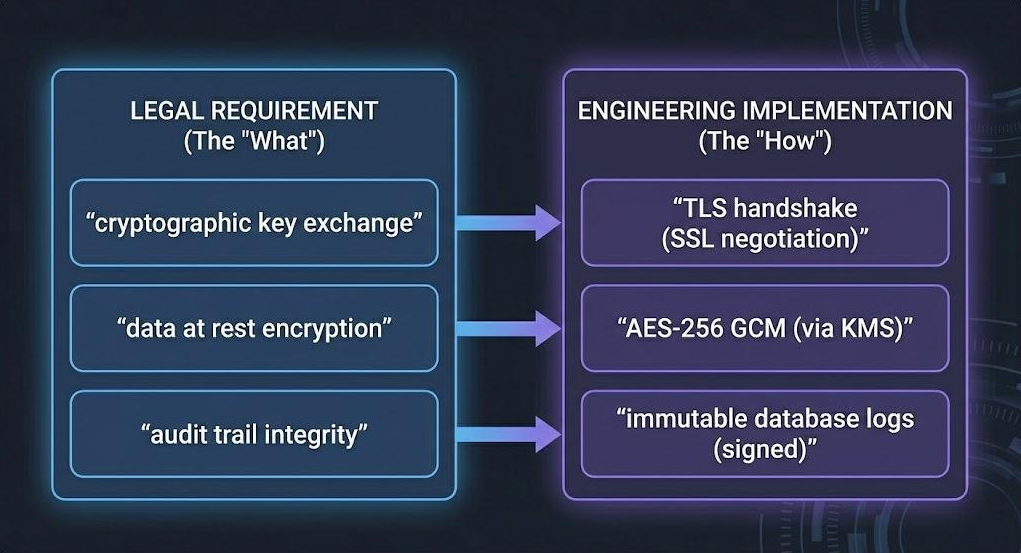

Legal language and implementation language rarely match.

A legal requirement might say: “The system determines cryptographic key exchange status.”

Meanwhile, product documentation might say:

- TLS negotiation

- SSL handshake

- Certificate validation

- HTTPS configuration

Humans can bridge that gap. Search engines usually can’t.

As a result, reviewers were spending ~40 hours per audit manually searching, reading, and mapping evidence.

The goal became clear: Cut time to trusted evidence packs without sacrificing defensibility.

Why “Better Search” Wasn’t Enough

At first glance this looks like a search problem. In reality, it’s a semantic translation problem. Traditional search fails in three ways:

Vocabulary gap

Legal phrasing rarely matches the language used by engineers or documentation.

Precision gap

Finding “HTTPS mentioned somewhere” does not prove compliance with a specific requirement.

Traceability gap

Links without quotes or context slow legal review and make evidence harder to defend.

What auditors actually need is a system that can:

- Translate legal requirements into operational language

- Search across multiple public sources

- Extract passages that support specific criteria

- Assemble those findings into a defensible report

In other words: A system that thinks like an auditor.

Our Solution: The Evidence Detective

We built a system we call The Evidence Detective: a multi-agent workflow embedded directly into the client’s existing Ruby application.

No migration.

No new platform.

No infrastructure rewrite.

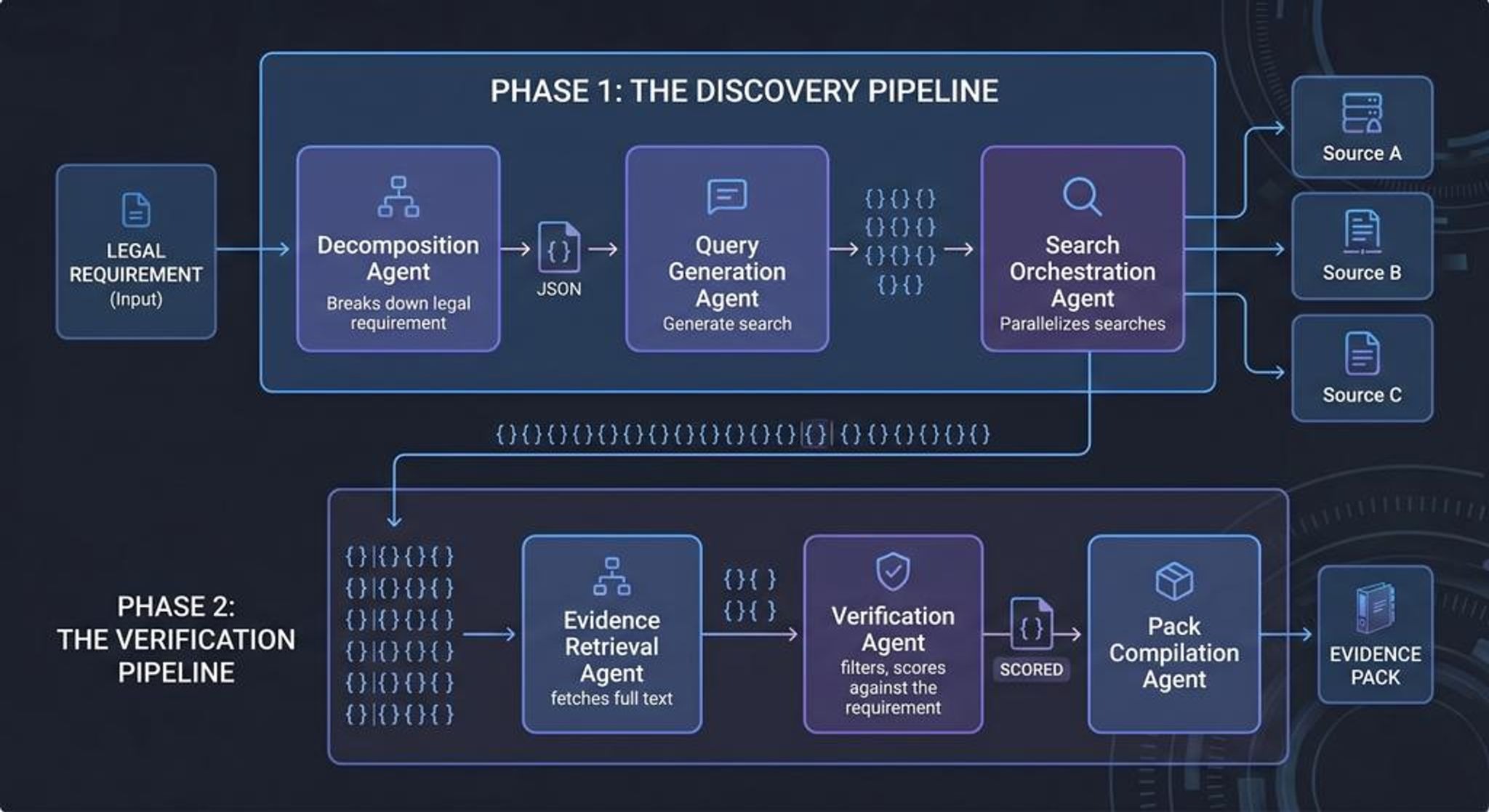

The system orchestrates six specialized AI agents that mirror the reasoning process of expert reviewers.

Each agent performs a focused task and passes structured JSON outputs to the next stage.

This specialization — rather than relying on one large model — is what makes the system both fast and reliable.

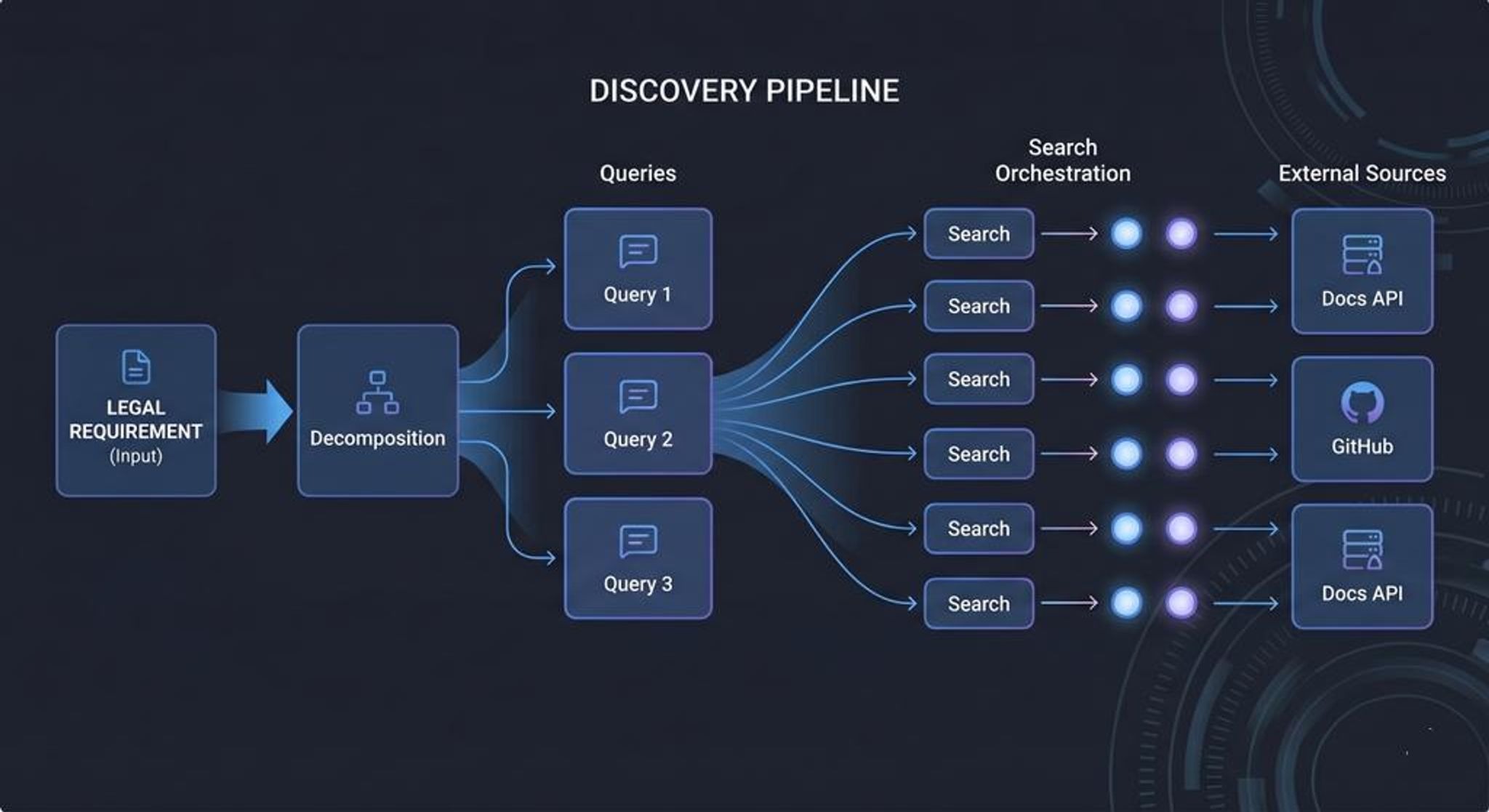

Discovery Pipeline: Finding Relevant Evidence

The first stage focuses on discovering potential evidence.

Element Decomposition Agent

Legal requirements are broken into atomic, testable elements.

Instead of evaluating a document as a whole, the system checks whether each individual requirement can be proven.

Each element becomes a discrete question the system must answer.
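
As a minimal sketch of what a decomposed element might look like in Ruby, assuming the decomposition agent returns JSON: the `Element` struct, its field names, the `parse_elements` helper, and the sample elements are all hypothetical and not taken from the production system.

```ruby
require "json"

# Hypothetical shape of one atomic element; field names are assumptions.
Element = Struct.new(:id, :text, :question, keyword_init: true)

# Parse the decomposition agent's JSON output and reject incomplete elements,
# so later stages never receive a half-specified question.
def parse_elements(json)
  JSON.parse(json, symbolize_names: true).fetch(:elements).map do |raw|
    missing = Element.members - raw.keys
    raise ArgumentError, "element missing: #{missing.join(', ')}" unless missing.empty?

    Element.new(**raw.slice(*Element.members))
  end
end

# Illustrative agent output for the example requirement quoted earlier.
agent_output = <<~JSON
  {"elements": [
    {"id": "E1",
     "text": "The system performs a cryptographic key exchange.",
     "question": "Do public sources describe a cryptographic key exchange?"},
    {"id": "E2",
     "text": "The system determines the status of that key exchange.",
     "question": "Do public sources show the exchange status being determined?"}
  ]}
JSON

elements = parse_elements(agent_output)
elements.each { |element| puts "#{element.id}: #{element.question}" }
```

Failing fast on a missing field keeps an incomplete element from flowing silently into search and scoring.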

Query Generation Agent

For each element, the system generates 10–15 semantic query variations.

These bridge the vocabulary gap between legal language and implementation language.

Example transformation:

Legal phrase

“cryptographic key exchange”

Generated search concepts

- TLS negotiation

- SSL handshake

- Certificate validation

- HTTPS setup

- Secure channel establishment

This dramatically improves recall during search.
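
The expansion step could be sketched as follows. In the system described here an agent generates 10–15 variations per element; this sketch substitutes a static lookup table containing only the five concepts listed above. `VOCABULARY`, `expand_queries`, and the `product` prefix are hypothetical names used for illustration.

```ruby
# Illustrative vocabulary map using the concepts listed above. A static hash
# stands in for the agent that generates these variations.
VOCABULARY = {
  "cryptographic key exchange" => [
    "TLS negotiation",
    "SSL handshake",
    "Certificate validation",
    "HTTPS setup",
    "Secure channel establishment"
  ]
}.freeze

# Return one search query per developer-language concept whose legal phrase
# appears in the element text. `product` is an optional, hypothetical prefix.
def expand_queries(element_text, product: nil, vocabulary: VOCABULARY)
  normalized = element_text.downcase
  vocabulary.flat_map do |legal_phrase, concepts|
    next [] unless normalized.include?(legal_phrase.downcase)

    concepts.map { |concept| [product, concept].compact.join(" ") }
  end.uniq
end

queries = expand_queries(
  "The system determines cryptographic key exchange status.",
  product: "ExampleProduct"
)
puts queries
```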

Search Orchestration Agent

The system then executes parallel searches across multiple public sources:

- documentation portals

- developer guides

- repositories

- release notes

- technical datasheets

Results are deduplicated and filtered to maintain diverse evidence candidates for downstream analysis.
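
One way to sketch the fan-out and deduplication, assuming each source is wrapped in an adapter that takes a query and returns candidates: `Candidate`, `search_all`, `STUB_ADAPTERS`, and the example URLs are hypothetical, and plain Ruby threads stand in for whatever concurrency mechanism the production system uses.

```ruby
Candidate = Struct.new(:url, :source, :snippet, keyword_init: true)

# Run every query against every source adapter in its own thread, then
# deduplicate by URL (ignoring a trailing slash) and cap results per source
# so that no single source crowds out the others.
def search_all(queries, adapters, per_source_limit: 3)
  threads = adapters.flat_map do |_name, adapter|
    queries.map { |query| Thread.new { adapter.call(query) } }
  end

  candidates = threads.flat_map(&:value) # value waits for each thread to finish
  unique = candidates.uniq { |candidate| candidate.url.chomp("/") }
  unique.group_by(&:source).flat_map { |_source, group| group.first(per_source_limit) }
end

# Stub adapters standing in for real documentation and repository searches.
STUB_ADAPTERS = {
  docs: lambda do |_query|
    [Candidate.new(url: "https://docs.example.com/tls/", source: :docs,
                   snippet: "TLS handshake overview")]
  end,
  repos: lambda do |query|
    [Candidate.new(url: "https://git.example.com/search/#{query.tr(' ', '-')}",
                   source: :repos, snippet: "match for #{query}")]
  end
}.freeze

results = search_all(["TLS negotiation", "SSL handshake"], STUB_ADAPTERS)
results.each { |candidate| puts "#{candidate.source}: #{candidate.url}" }
```

The per-source cap is a simple stand-in for the diversity filter: it keeps one prolific source from filling the candidate list.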

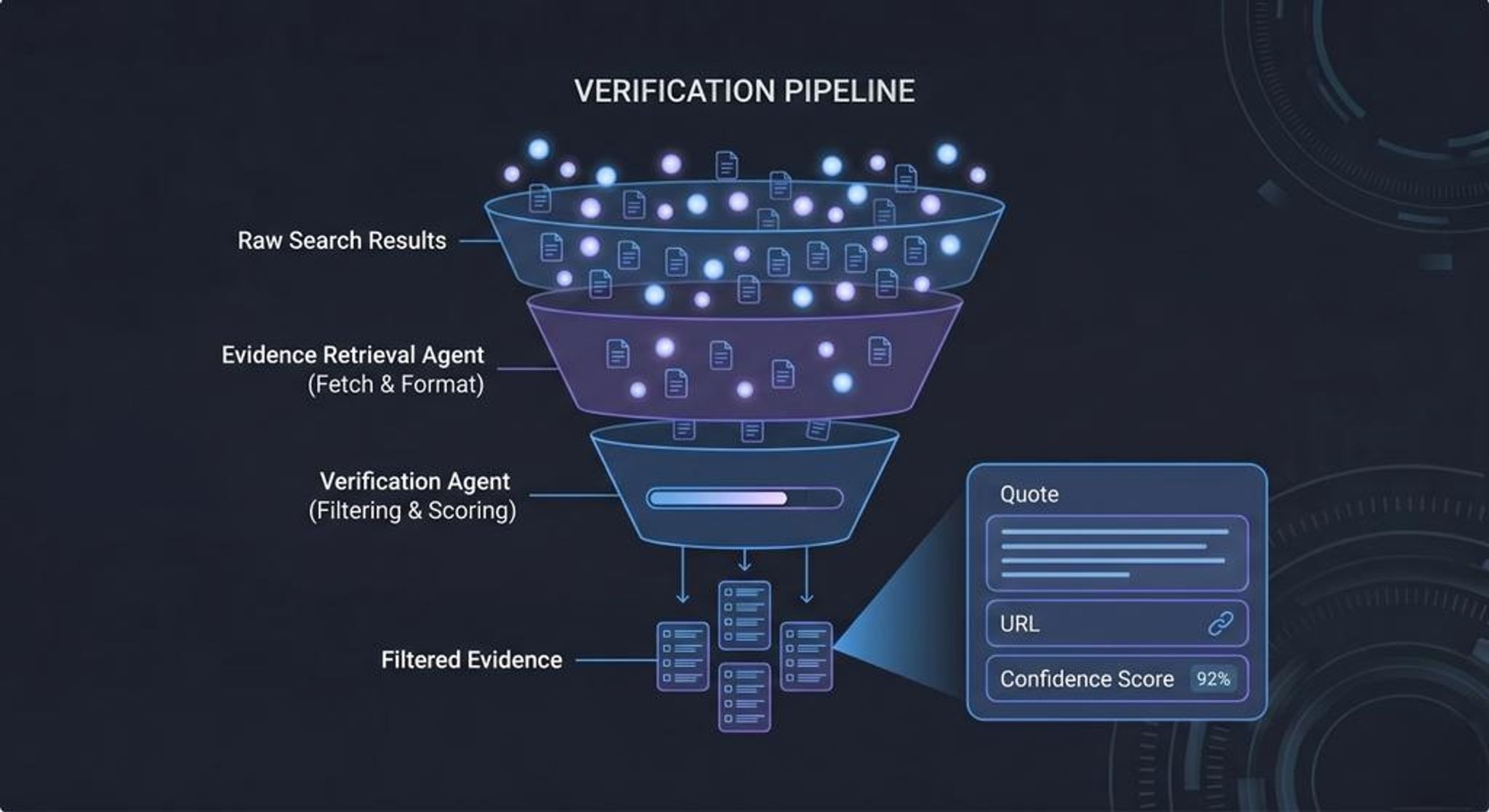

Verification Pipeline: Building the Case

Once potential sources are discovered, the system moves into verification.

This stage converts raw findings into evidence-grade proof.

Evidence Mapping Agent

Relevant passages are extracted and mapped directly to specific elements.

Each piece of evidence includes:

- exact quote

- source URL

- context snippet

- timestamp

No broad matches are allowed.

Every passage must support a specific requirement.
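
The "exact quote" rule can be sketched as a verbatim check: a record is built only if the quote appears character for character in the fetched page text. The `Evidence` struct, `map_evidence`, the 40-character context window, and the example URL are assumptions made for illustration.

```ruby
require "time"

Evidence = Struct.new(:element_id, :quote, :source_url, :context, :retrieved_at,
                      keyword_init: true)

# Build an evidence record only if the quote appears verbatim in the page text.
# A paraphrase or partial match returns nil, which enforces "no broad matches".
def map_evidence(element_id:, quote:, source_url:, page_text:,
                 context_chars: 40, retrieved_at: Time.now.utc)
  start = page_text.index(quote)
  return nil if start.nil?

  from = [start - context_chars, 0].max
  to = [start + quote.length + context_chars, page_text.length].min
  Evidence.new(
    element_id: element_id,
    quote: quote,
    source_url: source_url,
    context: page_text[from...to], # quote plus surrounding text
    retrieved_at: retrieved_at.iso8601
  )
end

PAGE_TEXT = "Connection setup. During the TLS handshake, the server negotiates " \
            "encryption parameters before establishing the secure connection. " \
            "Sessions may later be resumed."

evidence = map_evidence(
  element_id: "E1",
  quote: "During the TLS handshake, the server negotiates encryption parameters",
  source_url: "https://docs.example.com/tls",
  page_text: PAGE_TEXT
)
puts evidence.context
```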

Confidence Scoring Agent

Evidence is evaluated for completeness and quality.

The agent identifies:

- gaps in coverage

- contradictory information

- weak or ambiguous sources

These signals produce a confidence score for each requirement.

Anything uncertain is flagged for human review.
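
A rule-based sketch of how the three signals might combine into a score and a review flag. The article does not describe the actual scoring method, so the 0–100 scale, the weights, `REVIEW_THRESHOLD`, and `score_element` are all invented for illustration.

```ruby
REVIEW_THRESHOLD = 70 # hypothetical cut-off on a 0-100 scale

# Score one element from three signals. The weights are illustrative only:
# more evidence raises the score, contradictions and weak sources lower it.
def score_element(evidence_count:, contradictions:, weak_sources:)
  reasons = []
  reasons << "gap in coverage" if evidence_count.zero?
  reasons << "contradictory information" if contradictions.positive?
  reasons << "weak or ambiguous sources" if weak_sources.positive?

  base = evidence_count.zero? ? 0 : [50 + 20 * (evidence_count - 1), 90].min
  score = (base - 40 * contradictions - 10 * weak_sources).clamp(0, 100)

  {
    score: score,
    needs_review: score < REVIEW_THRESHOLD || contradictions.positive?,
    reasons: reasons
  }
end

puts score_element(evidence_count: 3, contradictions: 0, weak_sources: 0)
puts score_element(evidence_count: 1, contradictions: 0, weak_sources: 1)
```

Any contradiction forces human review regardless of the numeric score, matching the rule that anything uncertain is flagged.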

Report Generation Agent

Finally, the system assembles the audit evidence pack.

Each element includes:

Requirement → Supporting quote → Source link → Confidence score

The result is a report that legal teams can immediately use for decision-making.

Everything is traceable and reviewable.
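
Assembling the pack could look roughly like this, assuming earlier stages hand over plain hashes. `build_evidence_pack`, the hash keys, the threshold of 70, and the sample data are hypothetical.

```ruby
require "json"

# Assemble one report row per element: requirement, supporting quotes with
# their sources, and the confidence score. Elements without evidence or with
# a low score are kept in the report and flagged rather than dropped.
def build_evidence_pack(elements, evidence, scores, review_threshold: 70)
  elements.map do |element|
    matches = evidence.select { |item| item[:element_id] == element[:id] }
    confidence = scores.fetch(element[:id])
    {
      requirement: element[:text],
      evidence: matches.map { |item| item.slice(:quote, :source_url) },
      confidence: confidence,
      needs_review: matches.empty? || confidence < review_threshold
    }
  end
end

elements = [
  { id: "E1", text: "The system performs a cryptographic key exchange." },
  { id: "E2", text: "The system determines the status of that key exchange." }
]
evidence = [
  { element_id: "E1",
    quote: "During the TLS handshake, the server negotiates encryption parameters",
    source_url: "https://docs.example.com/tls" }
]
scores = { "E1" => 90, "E2" => 0 }

puts JSON.pretty_generate(build_evidence_pack(elements, evidence, scores))
```

Keeping unproven elements in the output makes gaps visible to the legal reviewer.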

A Concrete Example

Consider a requirement like: “The system determines cryptographic key exchange status.”

The Query Generation Agent expands this into developer terminology:

- TLS handshake

- SSL negotiation

- Certificate verification

- HTTPS configuration

The Search Orchestration Agent discovers a documentation passage like:

“During the TLS handshake, the server negotiates encryption parameters before establishing the secure connection.”

The Evidence Mapping Agent then ties that passage to the requirement and attaches the source.

The report includes:

- Requirement

- Evidence quote

- Documentation link

- Confidence score

Instead of searching for hours, reviewers receive direct, traceable proof.

Why Agents Instead of One Large Model?

Each agent specializes in a single reasoning task:

- decomposition

- query generation

- search

- evidence extraction

- verification

- reporting

Because each stage outputs structured data, improvements compound.

When the Query Generation Agent learns a better phrase pattern, all future audits benefit.

When the Evidence Mapping Agent surfaces conflicting passages, the Confidence Scoring Agent flags them automatically.

This orchestration is what cuts review time from ~40 hours to 3–4 hours, a reduction of roughly 90%.

Why Ruby Helped, Not Hindered

The entire system runs inside the client’s existing Rails stack.

Agents execute as Sidekiq background jobs, and every stage communicates using strict JSON contracts.

This provided several advantages:

- No migration or platform rewrite

- Predictable parsing between agents

- Full audit logs from day one

- Least-privilege read-only access to sources

The system behaves like any other production service in the application.
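
A strict JSON contract between stages might be enforced along these lines. This is a plain-Ruby sketch, not the client's code: `SearchStageJob`, `SEARCH_INPUT_CONTRACT`, `validate_contract!`, and the payload keys are hypothetical. In a Rails app the job class would typically include `Sidekiq::Job` and be enqueued with `perform_async`. Sidekiq serializes job arguments as JSON, which is one reason a JSON-string payload fits naturally.

```ruby
require "json"

class ContractError < StandardError; end

# Hypothetical input contract for the search stage: required key => type.
SEARCH_INPUT_CONTRACT = {
  "audit_id" => Integer,
  "element_id" => String,
  "queries" => Array
}.freeze

# Raise unless every contracted key is present with the expected type.
def validate_contract!(payload, contract)
  contract.each do |key, type|
    raise ContractError, "missing key: #{key}" unless payload.key?(key)
    raise ContractError, "#{key} must be a #{type}" unless payload[key].is_a?(type)
  end
  payload
end

# Plain Ruby stand-in for a background job. In a Rails app this class would
# include Sidekiq::Job and be enqueued with SearchStageJob.perform_async(json).
class SearchStageJob
  def perform(payload_json)
    payload = validate_contract!(JSON.parse(payload_json), SEARCH_INPUT_CONTRACT)
    # The real stage would run searches here; this sketch returns an empty
    # result in the shape the next stage is assumed to expect.
    JSON.generate(
      "audit_id" => payload["audit_id"],
      "element_id" => payload["element_id"],
      "candidates" => []
    )
  end
end

input = JSON.generate("audit_id" => 42, "element_id" => "E1", "queries" => ["TLS negotiation"])
puts SearchStageJob.new.perform(input)
```

Validating at the start of each stage means a malformed payload fails loudly in that job's logs instead of surfacing as a confusing error further down the pipeline.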

Real-World Impact

After deployment, the results were immediate.

Time to evidence pack

~40 hours → 3–4 hours

Cost per audit

~$2,000 → $100–$150

Accuracy

~90% based on independent spot-checks

Annual savings

Over $500,000 at ~300 audits per year
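
The savings and time figures can be checked with simple arithmetic. The sketch below uses only the numbers quoted above; the constant and method names are illustrative.

```ruby
AUDITS_PER_YEAR = 300
COST_BEFORE = 2_000      # dollars per audit
COST_AFTER = 100..150    # dollars per audit
HOURS_BEFORE = 40
HOURS_AFTER = 3..4

# Lowest savings come from the highest post-deployment cost, and vice versa.
def annual_savings_range
  low = (COST_BEFORE - COST_AFTER.end) * AUDITS_PER_YEAR
  high = (COST_BEFORE - COST_AFTER.begin) * AUDITS_PER_YEAR
  low..high
end

# Percentage reduction in hours per audit, as a low..high range.
def time_reduction_percent_range
  low = (HOURS_BEFORE - HOURS_AFTER.end) * 100.0 / HOURS_BEFORE
  high = (HOURS_BEFORE - HOURS_AFTER.begin) * 100.0 / HOURS_BEFORE
  low..high
end

savings = annual_savings_range
reduction = time_reduction_percent_range
puts "Annual savings: $#{savings.begin} to $#{savings.end}"
puts "Time reduction: #{reduction.begin}% to #{reduction.end}%"
```

At 300 audits per year this gives $555,000 to $570,000 in savings and a 90% to 92.5% reduction in hours, consistent with the "over $500,000" figure.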

Human reviewers now focus on edge cases and legal judgment, while the system handles discovery, normalization, and evidence mapping.

Why This Agentic Approach Works

Three design principles proved critical.

Specialization beats monoliths

Focused agents outperform a single general model for complex workflows.

Evidence beats links

Every assertion includes a quote, source, and traceable mapping.

Learning compounds

Successful query patterns persist across audits, improving future performance.

Over time, the system becomes better at bridging the vocabulary gap between legal language and technical documentation.

How to Start (A Low-Risk Path)

Teams interested in a similar approach can start small.

- Pick a high-volume audit workflow.

- Define the element schema and acceptance criteria.

- Connect read-only documentation sources.

- Run the system in shadow mode alongside human reviews.

- Compare time, cost, and accuracy against the baseline.

If the system doesn’t outperform the current process, you still gain insight into how the workflow can be improved.

Recent Outcomes

Across industries, similar systems have produced strong results.

Financial services

87% reduction in regulatory review time

Healthcare

95% faster clinical protocol verification

Manufacturing

$2.4M saved on ISO compliance audits
