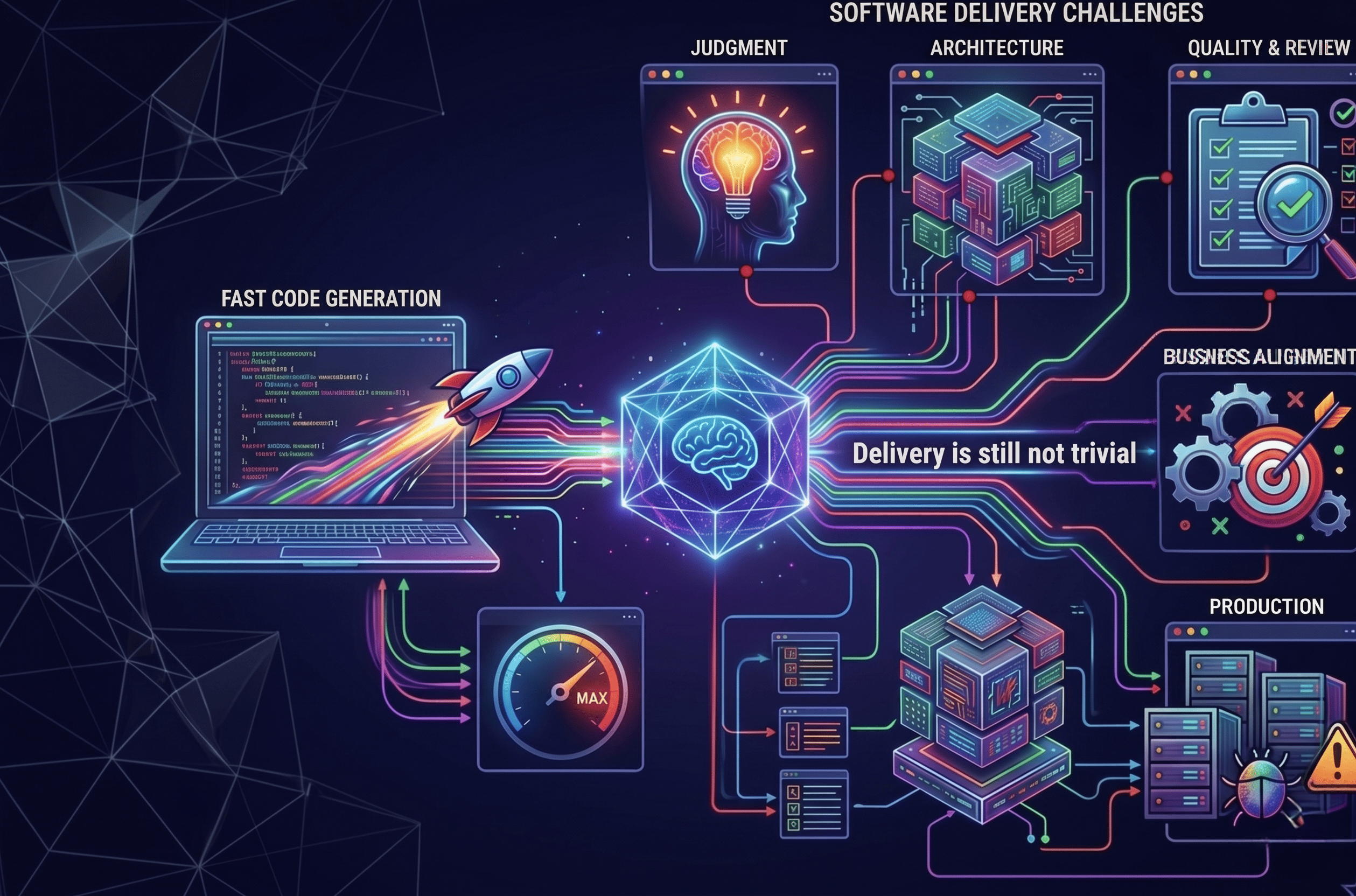

AI has made coding much faster. Software delivery, however, is still far from trivial.

In short

- AI has made coding much faster, but the hardest work has shifted rather than disappeared.

- The biggest constraints now lie less in raw implementation and more in judgment, architecture, review, quality, and business alignment.

- The teams that win will be the ones that turn AI speed into reliable, scalable delivery.

There’s a question I hear more and more often from founders and product leaders:

“If AI can write so much code now, shouldn’t software be dramatically faster and cheaper to build?”

A year ago, that question sounded premature. Today, it doesn’t.

It’s a fair question. In many ways, the answer is yes.

In my 2025 MBA thesis, I studied how AI adoption was reshaping software consulting and changing the role of the developer. That work combined market signals, expert perspectives, and interviews with technology leaders. One thing became very clear: AI is making software development much faster. Software delivery, though, remains far from trivial. My earlier work on developer demand and AI IDE adoption pointed in the same direction. What has changed since then is not the core conclusion. It is the magnitude.

The important point is simpler than it sounds: coding is now much faster than it used to be, sometimes dramatically so, and pretending otherwise misses what is happening in front of us. The exact multiplier matters less than the direction of change.

By late 2025, the improvement was already hard to ignore. OpenAI presented GPT-5 as its strongest coding model yet, while Anthropic positioned Claude Opus 4 as its best coding model, both showing stronger performance on software engineering tasks that mattered in practice. These models were becoming more useful on real software work, especially in debugging, larger repositories, and more agentic workflows.

At Rootstrap, we felt that shift early. In the 2025 snapshot I shared publicly, AI IDE adoption had already reached 100% across a group of 200 developers, with over 80% reporting productivity gains above 30% and meaningful results across 25+ active projects. Today, in 2026, the picture is more mature: across our roughly 200-engineer organization, AI usage is a given, and the gains are materially larger than what I published back then. That earlier article is still useful as a baseline, but it already understates where things are now.

And yet, building software still doesn’t feel instant.

Software was never just code

One of the biggest misconceptions in the current AI conversation is that software development is mostly about writing code. Code is central, of course. But it was never the whole job.

Even before the current wave of AI, the actual work of building software included understanding the business context, interpreting requirements, translating ambiguity into technical decisions, writing and testing code, documenting behavior, reviewing peer work, discussing trade-offs with stakeholders, and collaborating closely with product, design, QA, and operations. That was one of the key starting points in my thesis: a developer’s role is not limited to implementation, but extends across strategic and collaborative activities that help ensure the product is correct, usable, maintainable, and aligned with business goals.

All of those activities are still necessary today. Many of them are also improving with AI, just not at the same pace as raw code generation.

AI can help generate functions, tests, refactors, migrations, queries, and even substantial feature slices. Generating code faster is still very different from delivering the right product. Software delivery still includes choosing what to build, deciding how it should behave in edge cases, integrating it into the existing system, validating that it works under real constraints, and making sure the result survives first contact with users, future engineers, and production environments.

That is why two things can be true at once: AI writes a lot of the code now, and software is still far from trivial to build.

The bottleneck didn’t disappear. It moved

This is the heart of the issue.

If coding is now much faster, why does software still take time?

Because the bottleneck has moved upstream and outward.

A fair question many product and business leaders ask now is: “If we already have a solid PRD, why can’t AI just take it from there?”

A strong PRD absolutely helps. It reduces ambiguity and clarifies scope, yet it still does not eliminate the hardest part of software delivery: turning an intention into a system that works reliably in the real world.

A PRD can describe what the product should do. It usually does not fully resolve how the system should behave in edge cases, how it should integrate with existing tools and data, what trade-offs make sense between speed and maintainability, or which decisions will still look reasonable six months later as the product evolves.

AI can help a lot with implementation. It can generate code, propose structures, write tests, suggest integrations, and accelerate a surprising amount of execution. That is still very different from owning the judgment behind the solution.

Someone still has to decide what matters most, what can be simplified, what needs to be more robust, what should be prioritized now versus later, and how the technical approach should reflect the business goals of the product.

That is what architecture often means in practice. Not just drawing boxes or choosing technologies, but making a long series of decisions about structure, trade-offs, risk, and future change.

And yes, some of that eventually translates into technical choices around integrations, permissions, reliability, state management, testing, deployment, observability, and extensibility. The deeper question is not whether AI can help produce those pieces. It often can. The harder question is whether all of those choices fit together in the right way for this product, this team, and this stage of the business.

That is why a non-technical builder using tools like Claude Code can often generate something impressive and still fall short of what a strong engineering team contributes. The gap is not only in syntax. It is in judgment.

The hard part is often not getting code that runs. It is getting software that behaves correctly, fits the business, evolves cleanly, and does not create hidden debt that slows the company down later.

That is why software still takes time in the age of AI: not because engineers are typing at the same speed as before, but because the real work was never just typing.

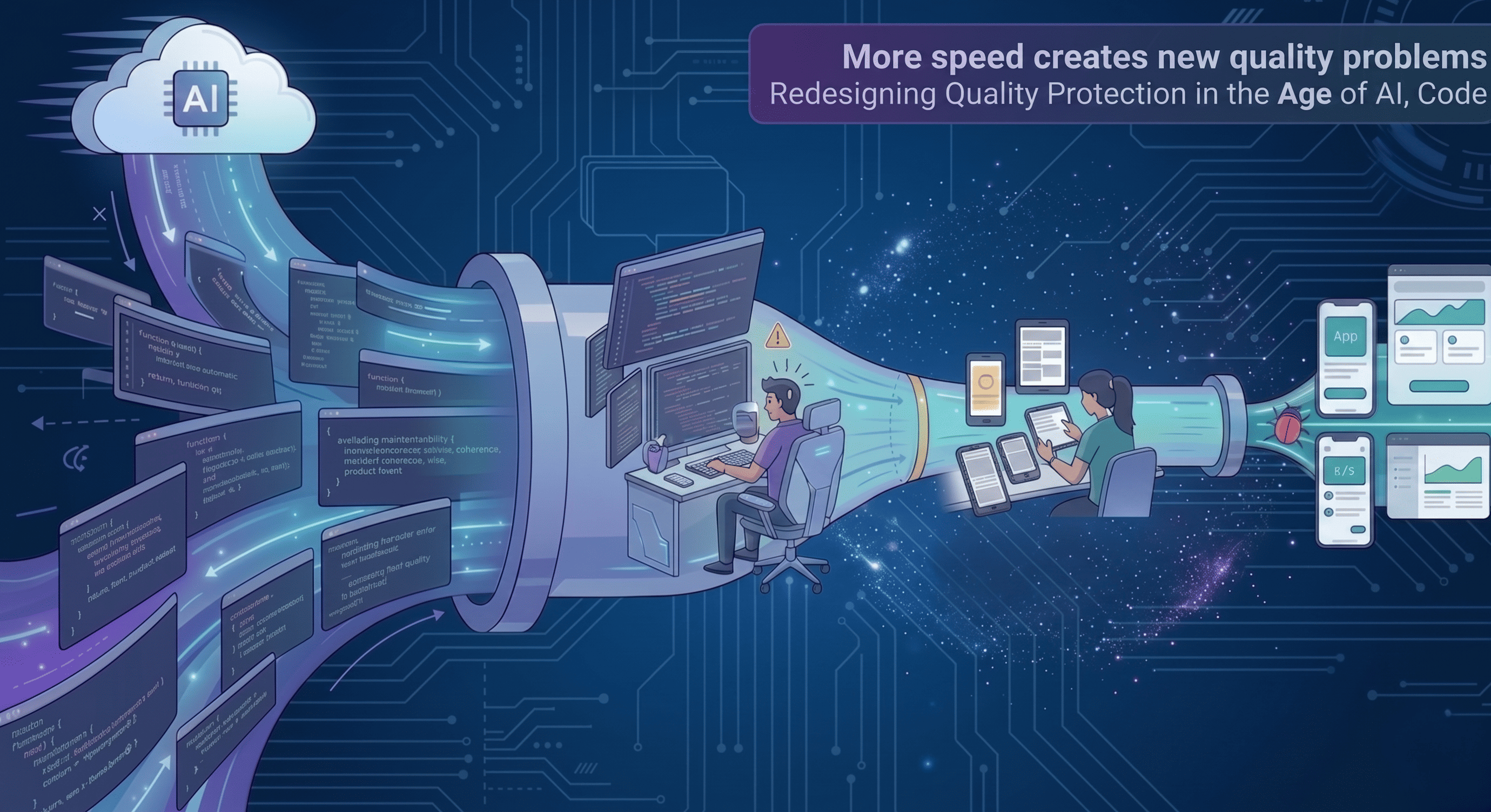

More speed creates new quality problems

There is another reason software still takes time now: faster generation creates new constraints downstream.

One of the clearest examples is code review.

When teams can produce more code in less time, the review system comes under pressure. Pull requests get larger or more frequent. More machine-generated code enters the pipeline. More things look superficially correct. And more decisions have to be evaluated not just for correctness, but for maintainability, coherence, security, regression risk, and product intent.

At Rootstrap, this has become a very real engineering challenge. We already had long-standing public code review guidelines, but the rise of AI-generated output has made it clear that traditional review habits need to evolve. In 2026, one of our engineering goals is precisely to rethink this area, because we now produce more code than before and the old, fully human review pattern is starting to become a bottleneck of its own. Our existing public guide still captures the spirit of what matters in review, including context, business knowledge, trade-offs, and two-reviewer discipline.

That does not mean replacing human review. It means using hybrid systems.

AI is increasingly useful for first-pass review on issues like conventions, duplicated logic, test gaps, simple regressions, and some categories of risk. Humans remain essential for business semantics, architectural fit, edge-case reasoning, prioritization, and deciding whether a change is actually good for the product. Both OpenAI and Anthropic are moving in this direction, making code review and debugging a bigger part of their coding systems. That direction is much closer to augmentation than full substitution.

In other words: AI helps produce more code, but it also forces us to redesign how quality is protected.

That redesign takes work too.

And code review is not the only place where this is showing up. We’re seeing similar pressure in areas like manual QA and in the evolving role of QA testers and QA engineers as code output accelerates. That is also a real topic for us internally, and one we’re actively working through, but it deserves its own piece rather than a detour here.

Technical depth still matters

There is a temptation in the current market to reduce engineering conversations to speed alone.

How fast can you ship it?

How many people do you still need?

How much of this can AI do now?

Those are valid questions. But they are incomplete.

Depending on the purpose of the software, maintainability, scalability, security, observability, testing discipline, and architectural consistency remain extremely important. If a system will support core business operations, handle sensitive workflows, integrate with multiple platforms, or evolve across years and teams, technical depth remains essential, even as code becomes cheaper to generate.

In fact, speed can make bad technical decisions more expensive.

If AI enables teams to generate code much faster, they can also create technical debt much faster. They can spread inconsistent patterns faster. They can lock in poor abstractions faster. They can create the illusion of progress while quietly degrading the health of the system.

That is why architecture remains a deeply human activity. It requires connecting the purpose of the software to the right technical solution. It requires judgment about trade-offs. It requires understanding what can be simple today without becoming fragile tomorrow.

This is also why I continue to find the SPACE framework useful when thinking about productivity in software. Productivity is not only activity. It also includes performance, communication and collaboration, efficiency and flow, and satisfaction and well-being.

In an AI-heavy world, that becomes even more important. Measuring success by raw code volume alone misses the point. The real question is whether faster code generation improves outcomes without degrading quality, clarity, or team effectiveness.

The agency model compounds the gains

There is a meaningful difference between having access to a powerful model and knowing how to systematically turn that power into better outcomes.

That is especially true in a world where the tooling changes constantly. New capabilities, workflows, and agentic patterns keep emerging across vendors, which means the real challenge is no longer access. It is adaptation.

This is one of the underappreciated advantages of building with an agency like Rootstrap. When you work with a team of roughly 200 engineers, you are not just paying for individual execution.

You are benefiting from collective adaptation.

What one engineer learns about AI-assisted testing, another may apply to a legacy refactor. What one team learns about context engineering, another may use in product discovery or API integration. Prompting patterns, repo-aware workflows, MCP usage, AI rules, code review heuristics, and guardrails improve faster when they are shared across dozens of teams working on different products and codebases.

That is one reason AI leverage compounds unevenly: it is not only about model access, but about how quickly practices spread across an organization. The agency model, at its best, lets clients benefit from the fact that many engineers are exploring the edge of these workflows in parallel and cross-pollinating what actually works.

That is also why our journey did not stop at AI IDE adoption.

In the public article I wrote in 2025, I described the next frontier as the rise of AI orchestrators: developers who do not just use AI IDEs, but skillfully combine tools, context, and intent to build faster and with greater impact. That direction has only become more relevant.

In practice, the evolution from chat-based assistance toward agentic workflows, cloud tasks, subagents, and multi-step orchestration is now a major part of how advanced teams work. For clients, that means the advantage is no longer just having engineers who use AI tools. It means working with a team that is continuously learning how to turn those tools into better delivery.

The important point is this: AI tools create the most value when they become part of an operating system for engineering, not just a personal shortcut.

The winners will be the teams that turn speed into reliable outcomes

So where does all of this leave us?

AI is not reducing the importance of engineering. It is changing where engineering creates value.

The winning teams will not be the ones who insist on coding manually. They will be the ones who know how to combine AI speed with judgment, architecture, validation, and business alignment. They will understand how to use models, agents, tools, and review systems without confusing output volume with product progress. They will know when to automate, when to verify, when to escalate to humans, and how to keep the whole system coherent as delivery speeds rise.

For business leaders, this is the key shift to understand.

The right question is no longer, “Do you use AI?” That is quickly becoming table stakes.

The better question is, “Can you turn AI into better delivery without losing quality, clarity, and long-term product health?”

That is a much harder question. But it is the one that matters.

And it is also the reason software still takes time in the age of AI.

Not because coding has not changed. It has.

In the age of AI, speed matters. But judgment is still what turns code into reliable software.
